NVIDIA and Continuum Analytics Announce NumbaPro, A Python CUDA Compiler

by Ryan Smith on March 18, 2013 9:00 AM EST

As NVIDIA’s GPU Technology Conference 2013 kicks off this week, there will be a number of announcements coming down the pipeline from NVIDIA and their partners. The biggest and most important of these announcements will come Tuesday morning with NVIDIA CEO Jen-Hsun Huang’s keynote speech, while some other product announcements such as this one are being released today with the start of the show.

Starting things off is news from NVIDIA and Continuum Analytics, who are announcing that they are bringing Python support to CUDA. Specifically, Continuum Analytics will be introducing a new Python CUDA compiler, NumbaPro, for their high performance Python suite, Anaconda Accelerate. With the release of NumbaPro, Python will be joining C, C++, and Fortran (via PGI) as the fourth major CUDA language.

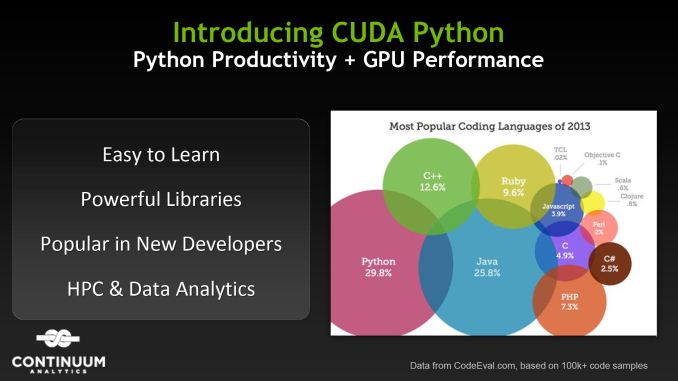

For NVIDIA, of course, the addition of Python is a big deal, as it gives them access to another substantial subset of programmers. Python is used in several different areas; though perhaps most widely known as an easy to learn, dynamically typed language common in scripting and prototyping, it’s also used professionally in fields such as engineering and “big data” analytics, the latter of which is where Continuum’s specific market comes into play. For NVIDIA this brings the dual benefit of making CUDA more accessible, thanks to Python’s reputation for simplicity, and opening the door to new HPC industries.

Of course this is very much a numbers game for NVIDIA. Python has been one of the more widely used programming languages for a number of years now – though by quite how much depends on who’s running the survey – so after getting C++ under their belts it’s a logical language for NVIDIA to focus on to quickly grow their developer base. At the same time Python has a much larger industry presence than something like Fortran, so it’s also an opportunity for NVIDIA to further grow beyond academia and into industry.

Meanwhile, though NumbaPro can’t claim to be the first such Python CUDA compiler – other projects such as PyCUDA have come first – Continuum’s Python compiler is set up to become the all but de facto Python implementation for CUDA. Like The Portland Group’s Fortran compiler, NVIDIA has singled out NumbaPro for a special place in their ecosystem, effectively adopting it as a 2nd party CUDA compiler. So while Python isn’t a supported language in the base CUDA SDK, NVIDIA considers it a principal CUDA language through the use of NumbaPro.

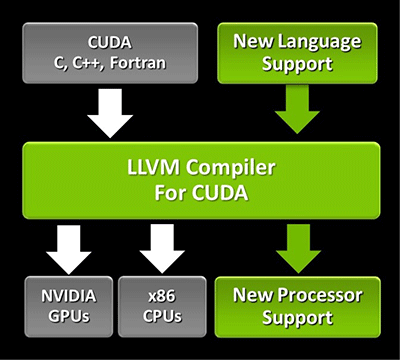

Finally, NVIDIA is also using NumbaPro to tout the success of their 2011 CUDA LLVM initiative. One of the goals of bringing CUDA support to LLVM was to make it easier to add support for new programming languages to CUDA, and that LLVM support is exactly what Continuum has used to build their Python CUDA compiler. NVIDIA’s long-term goal remains to bring more languages (and thereby more developers) to CUDA, and being able to discuss success stories involving their LLVM compiler is a big part of accomplishing that.

10 Comments

Gnarr - Monday, March 18, 2013 - link

Python the most popular.. since when? http://www.langpop.com/

aterrel - Monday, March 18, 2013 - link

Doesn't say the most popular language, just widely used. C/C++ is already covered and in scientific computing Python is definitely more popular than Java. The other languages above Python are not in the space of systems or graphics programming so I think the claim is pretty justified.

danielkza - Monday, March 18, 2013 - link

'One of the more widely used' is not equivalent to 'the most popular'. At no point in the article was the latter implied.

Death666Angel - Monday, March 18, 2013 - link

The graphic says exactly that, though. "Most Popular Coding languages of 2013" -> Python leads with 29.8%.

REALfreaky - Monday, March 18, 2013 - link

B-b-but it isn't FOSS... why would they pick NumbaPro over PyCUDA?

aterrel - Monday, March 18, 2013 - link

There are lots of python + cuda things, PyCUDA, Theano, Copperhead, and so on. But it looks as if the plan is to make NumbaPro a FOSS product in the future http://continuum.io/selling-open-source.html

travisoliphant - Monday, March 18, 2013 - link

PyCUDA requires writing kernels in C/C++. It only uses Python to script or "steer" what is ultimately a C/C++ CUDA build.

CUDA Python is a direct Python to PTX compiler so that kernels are written in Python with no C or C++ syntax to learn. CUDA Python also includes support (in Python) for advanced CUDA concepts such as syncthreads and shared memory.

We participate extensively in FOSS at Continuum Analytics. Our principals and employees have written a great deal of code that is FOSS in the Scientific Python stack (NumPy, SciPy, Numba, Bokeh, Blaze, Chaco, PyTables, DyND, etc.). Maintaining this requires funding. We also value the coordinating mechanism of the market to allow those who can use the software to pay for its development. See our philosophy here: http://www.continuum.io/selling-open-source.html

You can also use NumbaPro for free by using our online analytics environment Wakari (www.wakari.io) and using the soon to be released GPU queue.

GoVirtual - Monday, March 18, 2013 - link

Very interesting. The million dollar question is: Does CUDA Python support arbitrary precision math types like Python does natively?

paddy_mullen - Monday, March 18, 2013 - link

Python, along with NumPy and SciPy, has been used for numerical coding applications for years. NumbaPro allows users to make NumPy code even faster. Here are some links for further information:

http://en.wikipedia.org/wiki/NumPy

http://continuum.io/blog/simple-wave-simulation-wi...

http://continuum.io/blog/the-python-and-the-compli...

tanders12 - Monday, March 18, 2013 - link

Coming on the heels of Travis' Numba talk at PyCon Saturday this is very exciting news. Congrats to Continuum on getting recognition for the awesome work you've done on this.