Micron's P320h: A Custom Controller Native PCIe SSD in 350/700GB Capacities

by Anand Lal Shimpi on June 2, 2011 12:01 AM EST

SSDs are beginning to challenge conventional drive form factors in a major way. On the consumer side we're seeing more systems use new form factors for SSDs, enabled by mSATA. The gumstick form factor used in the MacBook Air and ASUS UX Series comes to mind. SSDs can offer performance in a smaller package, thus helping scale down the size of notebooks.

The 11-inch MacBook Air SSD, courtesy of iFixit

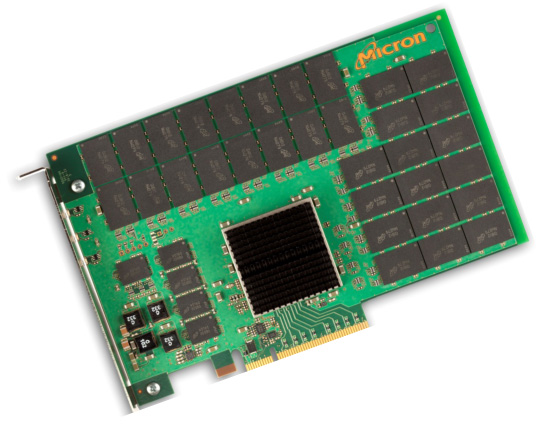

The enterprise market has seen a form factor transition of its own. While 2.5" SSDs are still immensely common, there's a lot of interest in PCIe solutions.

The quick and easy way to get a PCIe SSD is to take a bunch of SSDs and RAID them together on a single PCIe card. You don't really get a performance benefit over RAIDing the same drives in separate bays, but it does let you get a lot of performance without being drive-bay limited. This is what we typically see from companies like OCZ.

The other alternative is a native PCIe solution. In the aforementioned example, you typically have a couple of SATA SSD controllers paired with a SATA to PCIe RAID controller. With a native solution you'd skip the RAID controller entirely and just have a custom SSD controller that interfaces directly to PCIe. A native PCIe SSD is just an SSD that avoids SATA entirely, thus avoiding any potential SATA bottlenecks. Today Micron is announcing its first native PCIe SSD: the P320h.

The P320h is Micron's first PCIe SSD as well as its first in-house controller design. You'll remember from our C300/C400/m4 reviews that Micron typically buys its controllers from Marvell and simply does firmware development in house. The P320h changes that. While it's too early to assume that we'll see Micron-designed controllers for consumer drives as well, clearly that's a step the company is willing to take.

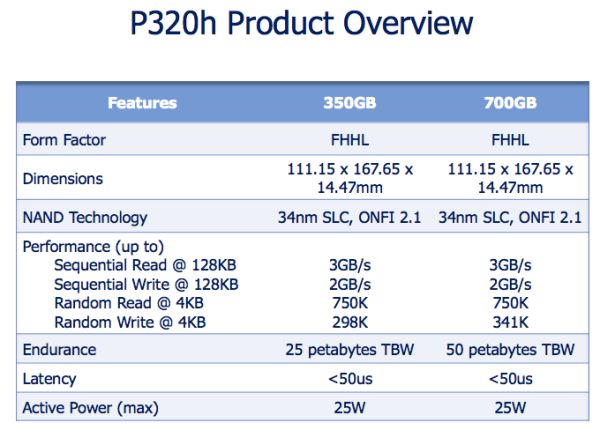

The P320h's controller is a beast. With 32 parallel channels and a PCIe gen 2 x8 interface, the P320h is built for bandwidth. Micron's peak performance specs speak for themselves:

Sequential read/write performance is up to 3GB/s and 2GB/s respectively. Random 4KB read performance is up at a staggering 750,000 IOPS, while random write speed peaks at 341,000 IOPS. The former is unmatched by anything I've seen on a single card, while the latter is a number that OCZ's recently announced Z-Drive R4 88 is promising as well. Note that these aren't steady-state numbers, nor are the details of the testing methodology known, so believe accordingly.
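As a rough sanity check on these peak figures, the sketch below converts the random IOPS numbers into bandwidth and compares them with the ceiling of a PCIe gen 2 x8 link. It assumes 4KB means 4096 bytes, GB/s means 10^9 bytes per second, and that the only link overhead is PCIe gen 2's 8b/10b encoding at 5 GT/s per lane; real packet and protocol overhead would lower the usable ceiling further. The helper names are made up for this illustration.

```python
# Back-of-the-envelope checks on the P320h's quoted peak specs.
# Assumptions: 4KB = 4096 bytes, GB/s is decimal (1e9 bytes/s), and
# PCIe overhead beyond 8b/10b line encoding is ignored.

def iops_to_gbps(iops: int, block_bytes: int = 4096) -> float:
    """Convert an IOPS figure at a fixed block size into GB/s."""
    return iops * block_bytes / 1e9


def pcie_gen2_gbps(lanes: int) -> float:
    """Per-direction PCIe gen 2 bandwidth: 5 GT/s per lane, 8b/10b encoded."""
    transfers_per_lane = 5.0e9   # 5 GT/s, one bit per transfer per lane
    encoding_efficiency = 8 / 10  # 8 data bits carried in every 10 line bits
    bits_per_s = lanes * transfers_per_lane * encoding_efficiency
    return bits_per_s / 8 / 1e9   # bits -> bytes -> GB


if __name__ == "__main__":
    print(f"750,000 read IOPS  ~ {iops_to_gbps(750_000):.2f} GB/s")
    print(f"341,000 write IOPS ~ {iops_to_gbps(341_000):.2f} GB/s")
    print(f"PCIe gen 2 x8 link ~ {pcie_gen2_gbps(8):.1f} GB/s per direction")
    # 3 GB/s sequential read spread evenly across 32 channels
    print(f"3 GB/s / 32 channels ~ {3e9 / 32 / 1e6:.1f} MB/s per channel")
```

On these assumptions, 750,000 random 4KB reads work out to roughly 3.07GB/s, essentially the same as the sequential read peak, and both sit under the roughly 4GB/s per direction that an x8 gen 2 link can carry. Spread across 32 channels, 3GB/s is a bit under 94MB/s per channel.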

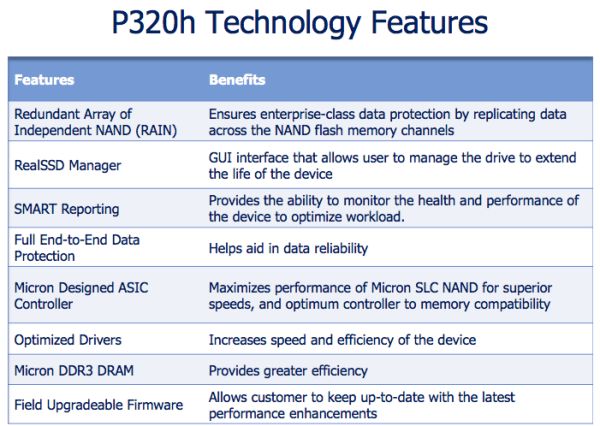

There is of course support for NAND redundancy, which Micron calls RAIN (Redundant Array of Independent NAND). Micron describes RAIN as very similar to RAID-7 with 1 parity channel; however, it didn't release information on what sorts of failures are recoverable as a result. RAIN, in addition to typical enterprise-level write amplification concerns, results in some pretty heavy overprovisioning on the drive, as you'll see below.
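Micron didn't say how RAIN lays out its parity, so the following is only a minimal sketch of the general idea behind a single parity channel: XOR a stripe of data pages to form a parity page, then XOR the surviving pages to rebuild any one that goes missing. The eight-channel stripe (seven data plus one parity) and all function names are hypothetical, not Micron's design.

```python
# Hypothetical single-parity striping across NAND channels, in the spirit of RAIN.
# Micron hasn't published RAIN's layout; this assumes plain XOR parity on one
# channel, which can rebuild exactly one lost page per stripe.
from __future__ import annotations

from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length pages."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_stripe(data_pages: list[bytes]) -> list[bytes]:
    """Append a parity page equal to the XOR of all data pages."""
    parity = reduce(xor_bytes, data_pages)
    return data_pages + [parity]


def rebuild(stripe: list[bytes | None]) -> list[bytes]:
    """Recover a single missing page (data or parity) by XORing the survivors."""
    missing = [i for i, page in enumerate(stripe) if page is None]
    if len(missing) > 1:
        raise ValueError("single parity can only recover one lost page per stripe")
    restored = list(stripe)
    if missing:
        survivors = [page for page in stripe if page is not None]
        restored[missing[0]] = reduce(xor_bytes, survivors)
    return restored


if __name__ == "__main__":
    data = [bytes([i] * 4) for i in range(1, 8)]  # 7 hypothetical data channels
    stripe = make_stripe(data)                    # plus 1 parity channel
    damaged = list(stripe)
    damaged[3] = None                             # simulate one failed channel
    assert rebuild(damaged) == stripe
    print("recovered page:", rebuild(damaged)[3].hex())
```

The trade-off is the single parity page: it costs one channel's worth of capacity in every stripe and can rebuild one lost page, but two failures in the same stripe are unrecoverable, which is why knowing exactly which failures RAIN covers matters.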

Micron will offer the P320h in two capacities: 350GB and 700GB. The drives use 16Gbit 34nm SLC NAND (ONFI 2.1). The 700GB drive features 64 package placements with 8 die per package: at 2GB per 16Gbit die, that works out to 16GB per package, or 1TB of NAND on the card.

The 350GB version has the same number of package placements (64) but only 4 die per package, which works out to 512GB of NAND on board. Obviously, with twice as many die per package, the 700GB drive gets some interleaving benefits that result in better 4KB random write performance.
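The capacity figures above can be reproduced with a few lines of arithmetic, which also shows how much of the raw NAND is held back for RAIN parity and spare area. This sketch uses the article's loose units, treating a 16Gbit die as 2GB and comparing raw and user capacity in the same "GB" without adjusting for binary versus decimal prefixes; the function names are illustrative only.

```python
# Raw NAND and reserved share for both P320h boards, using the article's figures.
# Assumption: 1 GB = 8 Gbit and raw/user capacities are compared in the same unit
# (no binary vs. decimal prefix adjustment).

DIE_GBIT = 16   # 16Gbit 34nm SLC die
PACKAGES = 64   # package placements on both boards


def raw_nand_gb(die_per_package: int) -> int:
    """Total raw NAND in GB for a given number of die per package."""
    gb_per_die = DIE_GBIT // 8  # 16Gbit -> 2GB
    return PACKAGES * die_per_package * gb_per_die


def reserved_fraction(user_gb: int, raw_gb: int) -> float:
    """Share of raw NAND not exposed to the user (spare area, RAIN parity, etc.)."""
    return 1 - user_gb / raw_gb


if __name__ == "__main__":
    for name, die_per_pkg, user_gb in (("350GB", 4, 350), ("700GB", 8, 700)):
        raw = raw_nand_gb(die_per_pkg)
        share = reserved_fraction(user_gb, raw)
        print(f"{name}: {raw}GB raw, {raw - user_gb}GB reserved ({share:.1%})")
```

Both boards come out to the same ratio: 162GB of 512GB and 324GB of 1TB, or roughly 31.6% of the raw NAND, is not exposed to the user, which is the heavy overprovisioning mentioned earlier.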

Pricing is unknown at this point, although Micron pointed out that it is expecting cost to be somewhere south of $16 per GB (at $16/GB that would be $5600 for the 350GB board and $11,200 for the 700GB board).

22 Comments


davegraham - Thursday, June 2, 2011

http://fusionio.com/products/iodriveoctal/ <-- The Fusion-io ioDrive Octal handily beats the specs posted by Micron. I believe even the recently announced TMS PCIe solution has its nose up in the rarefied air of enterprise-class PCIe solutions. ;) Kudos to Micron for starting the journey... they've got a LONG road ahead to prove themselves.

cheers,

Dave

GullLars - Thursday, June 2, 2011

This is what I also thought of when I read "Sequential read/write performance is up to 3GB/s and 2GB/s respectively. Random 4KB read performance is up at a staggering 750,000 IOPS, while random write speed peaks at 341,000 IOPS. The former is unmatched by anything I've seen on a single card, while the latter is a number that OCZ's recently announced Z-Drive R4 88 is promising as well. Note that these aren't steady state numbers nor are the details of the testing methodology known so believe accordingly."

ioDrives have been the benchmark to beat, and it doesn't seem like the P320h can beat them. ioDrives also scale from 1 to 8 controllers on the same card in a cluster-type setup, and come with support for InfiniBand for direct linking outside the host system.

TMS has both PCIe flash and RAM solutions that can beat this.

The question is really: will this be able to compete in its price range on a TCO vs. QoS basis? $5600 is more in the ioDrive Duo range.

engineer7 - Saturday, October 29, 2011

I think you mean "kudos to Micron, they've blown up the competition", at least for the range this card is marketed to. If you read the specs a little more closely you will see that the Fusion-io drive is a 150-watt, double-width, full-length card. The P320h says 25W max in this review. That's a huge difference. The Octal is about $5000 more according to a quick Google search. So this is really an apples-to-oranges comparison.

Watt for watt the P320h wins hands down. It is also a single-wide card. I suspect this will be very desirable for the enterprise/server market. I also saw on The Register that they have an HHHL card too...

FTW

blanarahul - Wednesday, December 21, 2011

Mind you, the Octal is 8, yes EIGHT, ioDrives in RAID 0.

vol7ron - Thursday, June 2, 2011

I like the idea of this (and the size); of course, that pricing is ridiculous. Assuming pricing will come down, I'm a bit skeptical about putting my long-term storage near, or right next to, my dedicated GPU. I'm concerned about the heat implications and the long-term effects on longevity.

For gaming systems, GPUs are getting hotter and hotter. I'm not sure passive cooling will suffice for these PCIe SSDs.

jcollett - Thursday, June 2, 2011

Why on earth would you think this was for your "gaming" system? Loaded to the hilt with SLC NAND, this is for highly accessible databases in an enterprise environment. When your computers are there to make you money, the cost doesn't look so bad at all.

mckirkus - Thursday, June 2, 2011

Agreed, getting a $300 Vertex 3 SSD would probably give you almost indistinguishable results (level load times) for $5k less. Also, SSDs are much better able to deal with heat than spinning disks.

Regarding gaming, if some day games used hard drives for more than just an installation location this could prove interesting, but PC games aren't even using 4GB of RAM yet.

vol7ron - Thursday, June 2, 2011

You guys are looking at today and not the big-picture, down-the-road impact. Don't be so short-sighted and nit-pick my word choice; it's a comment, not an article ;)

Sure, it's SLC today, but it'll be MLC tomorrow, perhaps with 3D-engineered hardware. Down the road they might not use passive cooling, but the PCIe slots are still near where a lot of the heat is generated, so even without the dedicated card, I'm curious about the long-term effects.

Back to your response: gaming systems tend to be used for more than just gaming, at least for the majority of gamers; their machines are more high-end, all-purpose systems. Sure, dedicated pro-gamers have specific setups and boxes used solely for gaming, but I was generalizing. Teens and amateur gamers are not going to spend the $$ on multiple computers, one to crunch numbers and one to play online. That being said, 4GB is not enough on a 64-bit system with background antivirus/anti-cheat running while recording demos. And if it isn't a serious gamer, they'll have their desktop widgets and internet browsers open, possibly even streaming TV if they have a decent system.

To counter your point, for those enterprise systems, it'd be more cost-effective to use the non-PCI alternatives. And if it is an enterprise system, I question the number of PCIe slots available that would allow for RAIDing, or even if that's possible using this setup, as it might be software-driven.

That being said, this alternative is great for one thing not mentioned above. Many times companies are locked into contracts to buy specific parts from certain vendors. These contracts allow those vendors to bump the prices astronomically. However, having this alternative provides loopholes in said contracts: even though it may be $5.6k for 350GB, that beats the $1k-$2.5k for 100GB SCSI contracts floating around.

Zap - Thursday, June 2, 2011

Wow, this makes the OCZ drives look quite affordable.

I wonder if anyone won the $200-million Powerball lottery last night? If I won that...

peternelson - Thursday, June 2, 2011

Anand, you're likely aware of cooperation among many leading companies to develop improved standards for this type of product (rather than the SATA-RAID onboard approach).

I'm thinking of:

http://www.intel.com/standards/nvmhci/

When you mention, preview or review such products, if possible establish and tell us whether the hardware conforms to the new NVMHCI standards, which should, among other things, improve driver support.

Thanks!