NVIDIA Announces Parallel Nsight 1.5 & CUDA Toolkit 3.2

by Ryan Smith on September 14, 2010 9:00 AM ESTNot to be outdone by Intel’s IDF and AMD’s counter-meeting this week, NVIDIA’s GPU Computing group has their own announcement this week ahead of their GPU Technology Conference next week.

Next week NVIDIA will be releasing the first major update to their GPGPU programming toolchain since the Fermi-based Tesla series launched earlier this year. Specifically, they will be releasing Parallel Nsight 1.5, and version 3.2 of the CUDA Toolkit.

Parallel Nsight 1.5

As we’ve reiterated a number of times now, NVIDIA’s long-term goals require the company to expand their GPU market beyond video cards and in to the High Performance Computing (HPC) space, where the brute force applied by GPUs for gaming purposes can be applied to academic, industrial, and even consumer computing applications. The Fermi architecture was a big step towards this goal, providing a GPU much better suited for GPU computing than the previous GT200/G80 thanks in large part to the GPU’s unified address space, ECC support, and support for C++. With the Fermi architecture NVIDIA would have the hardware necessary to take the next step in to GPU computing.

However good hardware requires good software, and that’s where our discussion is going today. With support for higher level languages comes the need for better programming tools and better debugging tools. Furthermore NVIDIA ultimately wants to extend practical GPU programming to the more rank & file programmers, where Microsoft’s Visual Studio is by far and wide the IDE of choice for C and C++. Reaching these programmers would require extending CUDA programming to the IDE and toolsets they already use, and in the process expand the market by making development more accessible than it was with older toolsets.

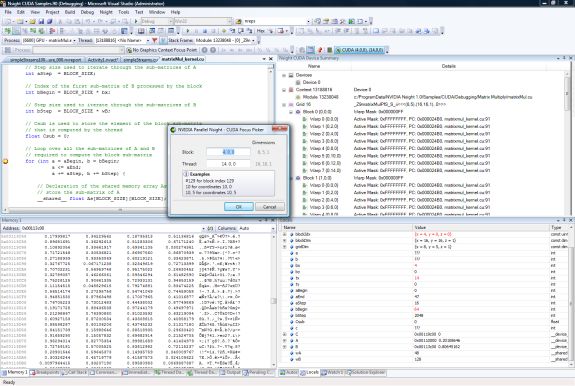

VS2008 with Parallel Nsight 1.0

In order to accomplish this goal, NVIDIA wrote a plugin for Visual Studio to enable the complete programming and debugging of CUDA and graphics code within Visual Studio – something that wasn’t previously possible – called Parallel Nsight. We first saw Parallel Nsight last year when Fermi was introduced under the name Nexus, and it finally shipped a few months ago along-side the first Fermi based Tesla cards. Since then roughly 8,000 developers have signed up to use the plugin.

On Wednesday the 22nd NVIDIA will be releasing the first major update to Parallel Nsight with release 1.5 of the plugin. Headlining this launch is the inclusion of Visual Studio 2010 support, bringing Parallel Nsight up to date with Microsoft’s IDE. Previously Parallel Nsight only supported VS 2008, which left Parallel Nsight behind as companies and developers began switching to Visual Studio 2010.

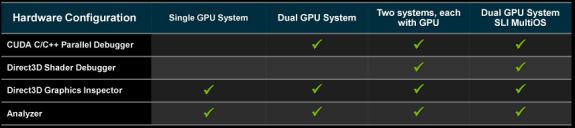

On the backend of things, Parallel Nsight 1.5 will be expanding the debugging capabilities of the plugin, particularly on single-system scenarios. Because the GPU needs to maintain the compute or graphics context being debugged, the only way to do debugging was to have a second system or a virtualized system to work with – one system to actually run the code, and a second system to debug from. While this is still (and likely always will be) the preferred method of debugging, it was impractical for single developers and smaller shops that couldn’t dedicate a second machine to the task.

For those programmers, Parallel Nsight 1.5 will be adding support for debugging compute code within a single system when multiple GPUs are present. This allows one GPU to run the application, and then a second GPU to actually drive the computer’s display. In this case the GPUs don’t even need to match so long as it's an NVIDIA GPU, allowing developers to use a high-end GPU for programming purposes while using a lesser GPU to drive the display, effectively halving the amount of hardware required for debugging. However at this time this single-system debugging support only applies to compute; shader debugging still requires a second system.

Parallel Nsight Debugging Configurations

The second big feature coming to Parallel Nsight 1.5 will be the addition of support for debugging code on a GPU running in Tesla Compute Cluster (TCC) mode. Since we don’t regularly cover Tesla news we haven’t had the opportunity to discuss TCC before, but in a nutshell TCC is a separate driver mode for Tesla cards that bypasses Windows’ control of a GPU through WDDM. WDDM, which was the new GPU driver model released with Windows Vista, was geared towards better integrating a GPU in to the OS by giving the OS more direct control of the GPU. The OS provided a virtual address space for video memory (including paging), recovery capabilities if the GPU hung, and enforced a coarse level of task scheduling on the GPU so that multiple processes could share a GPU without unnecessarily dragging a system down.

Compared to the old XPDM, WDDM was a big step up for GPU usage on Windows, but only for graphical purposes. With Windows’ iron-fisted control over the GPU and a focus on task scheduling for responsiveness over performance, it wasn’t ideal for GPGPU purposes. Case in point, with a WDDM driver NVIDIA was finding it took 30μs for a kernel to be launched, but if they had Windows treat the GPU as a generic device by using a Windows Driver Model (WDM) driver, that launch time dropped to 2.5μs. This coupled with the fact that a WDM driver is necessary to use Tesla cards in a Windows Remote Desktop Protocol environment (as any Folding @Home junkie can tell you, RDP sessions can’t access the GPU through WDDM) resulted in the birth of TCC mode.

Previously GPUs running in TCC modecould run applications, but the system functioned somewhat in a black box manner. In order to debug that code the GPU would need to be running with a regular WDDM driver, which had a performance impact and also meant it wasn’t possible to do this debugging in conjunction with an RDP session. With Parallel Nsight 1.5, it’s now possible to debug code on a GPU running in TCC mode, finally allowing developers to debug code against the same driver that the production version of the program would be running on.

The third major addition is support for profiling DirectCompute code when it’s used in conjunction with Direct3D. This is primarily a feature for game developers, who previously did not have an effective way to profile the performance of DirectCompute code in their games. DirectCompute has been underutilized in games so far, so perhaps this will expand its use.

Last but not least, Parallel Nsight 1.5 is also adding support for the new 3.2 version of the CUDA Toolkit

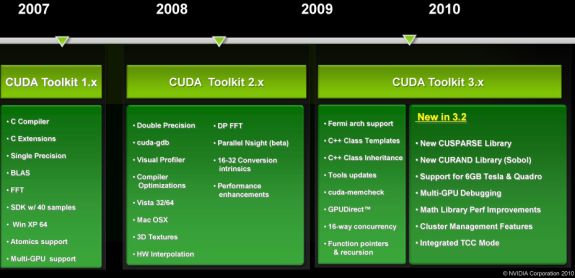

CUDA Toolkit 3.2

The CUDA toolkit is the agglomeration of all of NVIDIA’s programming tools: compilers, drivers, and libraries. Version 3.0 of the toolkit added support for the Fermi architecture and its unique features like C++, and version 3.2 will be the first major expansion of the toolkit since then.

A big focus for 3.2 is going to be on libraries. Libraries are a critical component for efficient GPU and CPU programming, as they provide hand-optimized implementations of common functions. Generally speaking, if you can hand something off to a library you can speed up that portion of the task significantly, and if the library can find a way to do something faster than before then it will also speed things up. For 3.2 NVIDIA is adding libraries for sparse matricies (CUSPARSE), H.264 encoding, and random number generation (CURAND), while also improving the performance of the existing math libraries such as the FFT and BLAS libraries.

The other big change will be the full realization of the 64bit address space of Fermi family Tesla cards. The hardware has been capable of addressing better than 32bits (4GB) of memory, but the software had not fully caught up. With the impending release of 2Gb GDDR5 RAM, 24 chip 6GB Tesla cards will be made possible, requiring the software to catch up. As of version 3.2 the toolkit (and Parallel Nsight) will be able to address these cards.

The 3.2 version of the toolkit largely goes hand-in-hand with Parallel Nsight 1.5, however at the moment it’s lagging behind Parallel Nsight by a bit. The release candidate of the toolkit will be ready shortly (likely at or before the Parallel Nsight 1.5 release) but the final version will not be ready until November.

All of these items will be on display next week, when NVIDIA hosts their annual GPU Technology Conference. We’ll be there for the later part of the conference to get a pulse on the state of GPGPU usage in consumer applications and to do some reconnaissance for future GPGPU articles, so stay tuned for our reporter’s notebook from GTC next week.

22 Comments

View All Comments

Soulkeeper - Tuesday, September 14, 2010 - link

Too bad they didn't focus on opencl instead.cuda needs to die already.

NaN42 - Tuesday, September 14, 2010 - link

Yeah, you're right. At my university some groups tried to use CUDA but encountered many problems. Perhaps the situation is better for Windows but OpenCL simply works on nvidia AND ati cards AND with Linux and most likely with Windows, too ;-).Lonbjerg - Tuesday, September 14, 2010 - link

Because OpenCL are way behind CUDA.Why should NVIDIA handicap them selfes just to "level the playing field"...make no sense what so ever.

raddude9 - Tuesday, September 14, 2010 - link

"Because OpenCL are way behind CUDA."Not an excuse.

OpenCL is managed by the Khronos Group which is open to submissions from any company, so Nvidia are free to enhance OpenCL with whatever CUDA-type features they like.

It does not matter what nifty features CUDA has, nobody is going to make a serious commitment to it unless it is cross-platform and Open.

Pirks - Wednesday, September 15, 2010 - link

It is a perfect excuse. MS used the same tactics to distinguish themselves. Why use "open" crap like Java when you can use much nicer proprietary .Net? Same with Apple, same logic - comfort wins. Why use crap "open" PC when more comfortable MacBooks, iPads and iPhones win although they are 100% proprietary (crap like darwin/webkit/etc doesn't help, don't even try to pull that BS here)I mean nice sweet comfortable quality products like DirectX/Mac/Windows/.Net always pwn open crap like Linux/Unix/Java/OpenGL, simply because they are way more user friendly. People don't care about open crap of religious freaks like RMS, they want nice stuff to work on, who cares if crap is free and nice stuff is not? This is how it's supposed to be in a market economy.

Same here with CUDA. nVidia wants CUDA to be nice sweet proprietary product. All the religious freaks keep sucking RMS and use "open" CL and "open" everything. Normal people who want the task done as quickly and swiftly as possible just pay up for proprietary nVidia/Windows/etc shit and do the job.

If you ever hear so called "scientists" complain about oh their sweet "open" Linux not working with CUDA - ignore then. Real scientists just use CUDA on Windows and get the job done. Fake ones keep complaining about Linux shminux etc, them losers just need another excuse to slack off.

UltraWide - Wednesday, September 15, 2010 - link

I agree 100%, there is the ideal world and the real world.Sahrin - Tuesday, September 14, 2010 - link

Because more open platform = more users = more profits?Lonbjerg - Wednesday, September 15, 2010 - link

That worked soooo goood for OpenGL right?sc3252 - Wednesday, September 15, 2010 - link

Yeah and don't forget 3DFX, oh wait... Also before you bring up the Bullshit about Opengl vs Direct X, Direct X is able to be implemented by anyone. It just so happens though that it is only usable on Windows.therealnickdanger - Tuesday, September 14, 2010 - link

I think that even NVIDIA knows that OpenCL is the future, but why not try to make as much money as possible with proprietary CUDA while they can? It's business, not charity. AFAIK, NVIDIA's cards also support OpenCL functions, so they can always fall back on it if (when) CUDA dies.Assuming that Microsoft doesn't take over with an API of their own at some point...