Intel Officially Reveals Post-8th Generation Core Architecture Code Name: Ice Lake, Built on 10nm+

by Ian Cutress on August 15, 2017 9:20 AM EST

Posted in: CPUs, Intel, Core, Coffee Lake, Ice Lake, Cannon Lake

In an unusual move for Intel, the chip giant has ever so slightly taken the wraps off of one of their future generation Core architectures. Basic information on the Ice Lake architecture has been published over on Intel's codename decoder, officially confirming for the first time the existence of the architecture and that it will be made on Intel's 10nm+ process.

This is an unexpected development, as the company has yet to formally detail (let alone launch) the first 10nm Core architecture, Cannon Lake, and it's rare these days for Intel to talk more than a generation ahead in CPU architectures. Equally interesting is the fact that Intel is calling Ice Lake the successor to their upcoming 8th generation Coffee Lake processors, which, codename bingo aside, throws some confusion on where the 14nm Coffee Lake and 10nm Cannon Lake will eventually stand.

As a refresher, the last few generations of Core have been Sandy Bridge, Ivy Bridge, Haswell, Broadwell, and Skylake, with Kaby Lake being the latest, released at the top of the year. Kaby Lake is Intel’s third Core product produced using a 14nm lithography process, specifically the second-generation ’14 PLUS’ (or 14+) version of Intel's 14nm process.

Meanwhile, when it comes to future products, back at CES Intel briefly showed a device based on a post-Kaby Lake design, called Cannon Lake and built on their 10nm process. Since then Intel has also confirmed that the 8th Generation of processors for desktops, called Coffee Lake, will be announced on August 21st (and we recently received promotional material to that effect). Ice Lake, then, seems poised to follow both Coffee Lake and Cannon Lake, succeeding both architectures with a single architecture based on 10nm+.

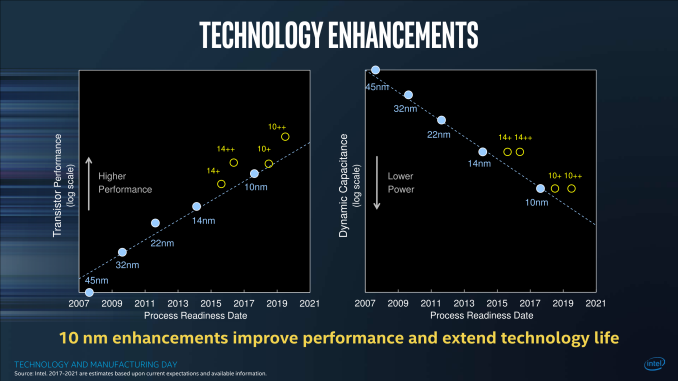

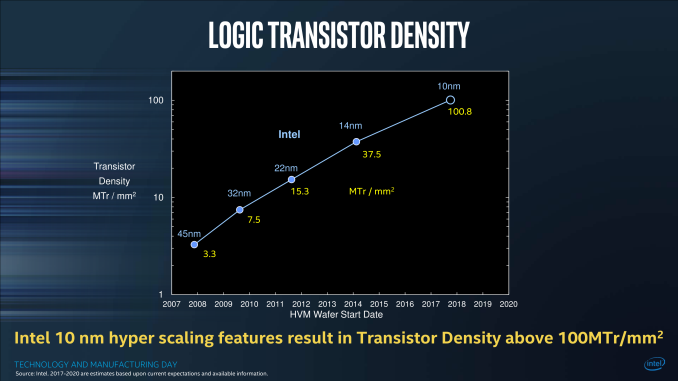

Working purely on lithographic nomenclature, Intel has three processes on 14nm: 14, 14+, and 14++. As shown to everyone at Intel’s Technology Manufacturing Day a couple of months ago, these will be followed by a trio of 10nm processes: 10nm, 10nm+ (10+), and 10nm++ (10++).

On the desktop, Core processors will go from 14 to 14+ to 14++, such that we move from Skylake to Kaby Lake to Coffee Lake. On the laptop side, this goes from 14 to 14+ to 14++/10: the same move from Skylake to Kaby Lake to Coffee Lake as on the desktop, but with Cannon Lake also launching for laptops at some point during the Coffee Lake generation. The next node for both after this is 10+, which will be helmed by the Ice Lake architecture.

Intel's Core Architecture Cadence

| Microarchitecture | Core Generation | Process Node | Release Year |
|---|---|---|---|
| Sandy Bridge | 2nd | 32nm | 2011 |
| Ivy Bridge | 3rd | 22nm | 2012 |
| Haswell | 4th | 22nm | 2013 |
| Broadwell | 5th | 14nm | 2014 |
| Skylake | 6th | 14nm | 2015 |
| Kaby Lake | 7th | 14nm+ | 2016 |
| Coffee Lake | 8th | 14nm++ | 2017 |
| Cannon Lake | 8th? | 10nm | 2018? |
| Ice Lake | 9th? | 10nm+ | 2018? |

The way that the desktop and laptop markets will be diverging and then converging is confusing a lot of people. Why is the laptop market splitting between 14++ and 10, and why is the desktop market not going to 10nm but straight to 10+? What lies beyond is a miasma of guesswork, leaked slides, and speculation about Intel’s strategy, but I believe the answer lies in Intel’s manufacturing technologies and the ability to move to newer lithographic nodes.

(We should interject here that the name of a lithographic node has slowly lost its connection to the features of the process and the actual transistor density and performance, such that TSMC’s improved 16FF+ is called 12FFN, but relies on similar transistor sizes with enhanced attributes. But 12 is a smaller number than 14, which is the marketing angle kicking in. By all accounts, Intel has typically been considered the more accurate foundry when it comes to numerical lithographic naming of the process, which some consider to be to its detriment.)

Intel originally predicted that they would move to 10nm almost a year ago, at the end of 2016 and two years after the launch of their 14nm process. But managing the technology required to advance to their version of 10nm has been fraught with difficulty. Progress on a new node can depend on external equipment, fine-tuning a process, or getting acceptable yields: while one manufacturer might be satisfied with an 80% yield, another might consider that a failure. Being able to obtain high yields (ramp up) will also be a function of die size, and so the newest nodes are typically launched with smaller mobile parts in mind first, as the yields for smaller parts are better than larger parts at the same defect rate.
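To put rough numbers on the die-size point, here is a minimal sketch using the textbook Poisson yield model, where the fraction of defect-free dies is exp(-defect density × die area). The model choice, the defect density, and both die areas are illustrative assumptions on our part, not Intel figures.

```python
import math


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Expected fraction of dies with zero defects under a simple Poisson model."""
    die_area_cm2 = die_area_mm2 / 100.0  # 100 mm^2 per cm^2
    return math.exp(-defects_per_cm2 * die_area_cm2)


# Hypothetical values, chosen only to show the trend.
DEFECT_DENSITY = 0.5  # defects per cm^2 (assumed, not a published figure)
EXAMPLE_DIES = [
    ("small mobile die", 70.0),    # mm^2, assumed
    ("large desktop die", 150.0),  # mm^2, assumed
]

for name, area in EXAMPLE_DIES:
    y = poisson_yield(area, DEFECT_DENSITY)
    print(f"{name}: {area:.0f} mm^2 -> {y:.1%} estimated yield")
```

With these assumed inputs the smaller die comes out at roughly 70% yield and the larger one at roughly 47%, on the same wafers with the same defect rate. Real yield models are more involved, but the direction of the effect is the same.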

Simply put, the first generation of 10nm requires small processors to ensure high yields. Intel seems to be putting the smaller die sizes (i.e. anything under 15W for a laptop) into the 10nm Cannon Lake bucket, while the larger 35W+ chips will be on 14++ Coffee Lake, a tried and tested sub-node for larger CPUs. While the desktop sits on 14++ for a bit longer, it gives time for Intel to further develop their 10nm fabrication abilities, leading to their 10+ process for larger chips by working their other large chip segments (FPGA, MIC) first.

From a manufacturing standpoint, Intel has been using multiple patterning techniques in its 14nm processes, and the industry is looking to when the transition to EUV will take place. Anton has some great writeups of the state of EUV and how different companies are transitioning to smaller nodes - they are well worth a read.

- EUV Lithography Makes Good Progress, Still Not Ready for Prime Time

- Samsung and TSMC Roadmaps: 8 and 6 nm Added, Looking at 22ULP and 12FFC

- GlobalFoundries Updates Roadmap: 7 nm in 2H 2018, EUV Sooner Than Later?

The crux of the matter is that EUV would shorten time to market and arguably make the process easier (albeit more expensive), and several fab companies are waiting for Intel to jump onto it first. With EUV not ready, Intel has had to invest in deeper multi-patterning techniques, which raise costs, decrease yields, and increase wafer process times considerably.

All of which leads to a miasma of increased delays, much to the potential chagrin of investors but also of customers who had banked on the power improvements that a new lithography node typically brings. Intel is still keeping spirits high by producing numbers that would suggest that their methodology is still in tune with Moore’s Law, even if the products seem to be further strung out. Some analysts concur with Intel’s statements, while others see it as hand-waving until 10/10+ hits the market. Intel would also point out that it is developing other technologies such as Embedded Multi-Die Interconnect Bridges (EMIB) to assist in equipping chips with high-speed fabric or glue-logic.

Given its position as a post-8th gen architecture, Ice Lake is likely to hit sometime in 2018, perhaps 2019, depending on Intel’s rate of progress with larger chips and the 10+ process. Intel’s other market segments, such as FPGAs (Altera), Xeon Phi (MIC) and custom foundry partners, are also in the mix to get into some 10nm action.

(Note that Intel’s next generation of Xeon Scalable Processors is called Cascade Lake, a 2018 refresh of the Skylake generation launched this year.)

Source: Intel

106 Comments


TEAMSWITCHER - Tuesday, August 15, 2017 - link

This is going to be a great upgrade point... New MacBook Pro... New Windows Gaming Desktop... Both in 2018. Waiting is the hardest part.

goatfajitas - Tuesday, August 15, 2017 - link

I wouldn't wait. Regardless of process shrink, each generation is 15-30% faster on Intel's selected and skewed benchmark and 3-10% faster in each product slot on most legitimate unbiased benchmarks. If you wait, there is always something better coming.

Qwertilot - Tuesday, August 15, 2017 - link

NV GPU's are worth waiting for I guess - they're very predictable in their increase so you can work out ~roughly how much performance you want at a given price/power combination and what year you're likely to be able to get it.....

bryanlarsen - Tuesday, August 15, 2017 - link

Nah. Pascal was a huge jump in performance, but it had a new process and a significantly updated architecture. In Intel terms, it was both tick & tock. Volta won't have a significantly new process, and it appears it will have less architectural changes too.

In other words, it'll be less like Maxwell -> Pascal and more like Kepler -> Maxwell.

firerod1 - Tuesday, August 15, 2017 - link

Nvidia 6 series, 7 series were pretty much the same except for 780 ti/titan, and 9 series was only a 20-40% jump in performance where pascal was a >50% jump on several cards.

niva - Wednesday, August 16, 2017 - link

Only 20-40% jump in performance. Spoiled brats.

Santoval - Tuesday, August 15, 2017 - link

Since GV100 is the largest die of all time, with quite some margin over the second largest one, and since it is actually still at a ~15nm process (compared to TSMC's 16nm), a fully enabled die should be the greatest power hog of all time. That, of course, will be addressed by plenty of "dynamic on/off switching" (dark silicon), and by disabling the tensor cores and some CUDA (shader) blocks in the consumer versions. The fully enabled GV100 for professional cards should still be burning power like crazy though, and most likely require a liquid cooler. Unless TSMC did wonder with their 12FFN process.

I am not sure if Nvidia plans to design smaller Volta GPUs for consumer cards. Perhaps that would be too expensive and not worth the bother. Or would it be more expensive to waste such large dies on consumer cards? In any case GV100 at that process node is at the maximum possible die size. It cannot get any bigger. Which is why Nvidia is thinking about taking the AMD CPU route and using 2+ dies on the same package. They will be forced to do that if by the time Volta's successor is to be developed (at the moment it should be at the preliminary design stage) a 7-10 nm process is not viable.

Santoval - Tuesday, August 15, 2017 - link

edit : "Unless TSMC did wonderS with their 12FFN process."

StevoLincolnite - Wednesday, August 16, 2017 - link

I am still using Sandy Bridge-E from like 5-6 years ago.

I just don't see a point in upgrading right now.

She can clock to 4.8ghz with the push of a button, 5ghz if I push the volts.

Which makes it very competitive with Ryzen's 6-core chips anyway.

With my 1866Mhz DDR3 modules, I have more bandwidth than Dual-Channel DDR4 3200Mhz.

Still runs every game and app I throw at it flawlessly.

Has PCI-3.0, USB 3.0.

Only thing that is a let down is that it only has Sata 6Gbit rather than 16Gbit.

And no USB 3.1, USB C. - But you can live without that, not worth the rush to upgrade.

Maybe in a year or two I can finally get a 10-12 core processor for a good price and my 3930K can finally be left to rest.

It's insane how long this rig has lasted me and it's still got a ton of life left in it.

philehidiot - Wednesday, August 16, 2017 - link

Aye, I'm on an older i5 set up and with all the hype you'd think I should be crying out for upgrading but simply put there's a reason new PC sales are stagnating and it's because there's just no need to upgrade. I'm on DDR3 with high performance modules like you and see no reason to shell out for DDR4 - I'm going to notice zero appreciable difference. As you say, missing out on the very latest USB standards and my older GTX 780 is now really the equivalent of a fairly mid range card (they say an RX 480 has about 125% of its performance) but that's a drop in upgrade I'll do when the time is right and certainly doesn't warrant a whole new system.

For gaming I kind of blame the consoles for holding things back. With their hardware lifecycles lasting so long in comparison to PC GPUs it means devs just don't really seem to be creating stuff that really pushes the latest hardware as they're developing cross platform and the PC market is usually the smaller one, so why put extra effort into it? So we end up with cards with insane performance on the latest games with things like the 1080 and older cards choochin' along just fine for much longer. It's probably a similar story with CPUs - they can't make games which require masses of CPU horsepower to run well because that would compromise performance on consoles.